Live video streaming architecture is the backbone of modern OTT platforms such as Hotstar, Netflix Live, YouTube Live, and Prime Video. It delivers video in near real time to millions of viewers in different locations while keeping buffering low and scaling with audience size. Understanding this architecture is essential for developers, system designers, and engineers working on media platforms.

In this guide, we will explore the end-to-end streaming pipeline: ingest servers, transcoding, chunking, CDN distribution, DRM security, and the user playback workflow.

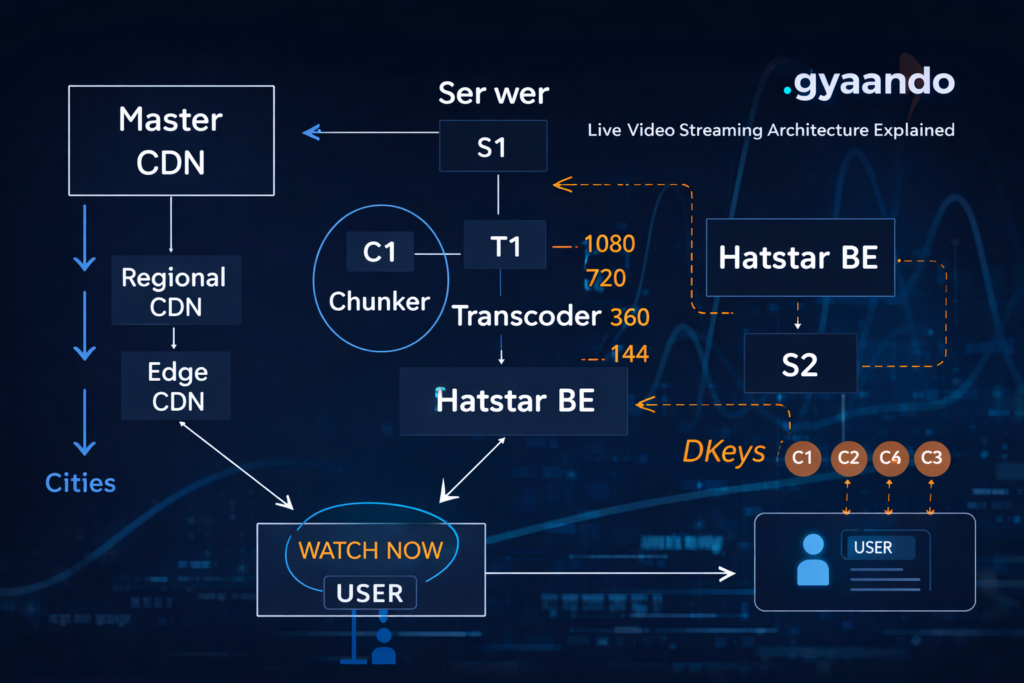

How Live Streaming Works (High-Level Flow)

A typical live streaming pipeline follows these steps:

- Camera or broadcast source generates live feed

- Feed reaches an ingest server

- Transcoder creates multiple quality versions

- Chunker splits video into small segments

- CDN distributes video globally

- Users stream from the nearest edge server

This pipeline ensures scalability and smooth playback across devices and network conditions.

1. Live Feed Ingestion Layer

Role of Ingest Server

The ingest server is the entry point of live streaming systems. It receives the video feed from encoders or broadcasting tools.

Responsibilities

- Accept live stream via RTMP or SRT

- Validate stream quality and format

- Buffer the incoming feed briefly to absorb network jitter before processing

- Trigger encoding and packaging workflows
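The validation step above can be sketched roughly as follows. This is only an illustration: the `StreamInfo` fields, the accepted codecs, and the bitrate limit are hypothetical values chosen for the example, not the rules of any particular ingest server.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical acceptance limits; a real platform would tune these.
ALLOWED_PROTOCOLS = {"rtmp", "srt"}
ALLOWED_VIDEO_CODECS = {"h264", "hevc"}
MAX_BITRATE_KBPS = 20_000


@dataclass
class StreamInfo:
    """Metadata an ingest server might extract from an incoming feed."""
    protocol: str
    video_codec: str
    width: int
    height: int
    bitrate_kbps: int


def validate_stream(info: StreamInfo) -> list[str]:
    """Return a list of problems; an empty list means the feed is accepted."""
    problems = []
    if info.protocol.lower() not in ALLOWED_PROTOCOLS:
        problems.append(f"unsupported protocol: {info.protocol}")
    if info.video_codec.lower() not in ALLOWED_VIDEO_CODECS:
        problems.append(f"unsupported video codec: {info.video_codec}")
    if info.width <= 0 or info.height <= 0:
        problems.append("invalid resolution")
    if not 0 < info.bitrate_kbps <= MAX_BITRATE_KBPS:
        problems.append(f"bitrate out of range: {info.bitrate_kbps} kbps")
    return problems


feed = StreamInfo("rtmp", "h264", 1920, 1080, 6000)
print(validate_stream(feed) or "accepted")
```

Only feeds that pass these checks would go on to trigger the encoding and packaging workflows.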

Common Streaming Protocols

- RTMP (Real-Time Messaging Protocol)

- SRT (Secure Reliable Transport)

- WebRTC (Low latency streaming)

2. Transcoder Layer (Adaptive Bitrate Streaming)

The transcoder is one of the most critical components of live video streaming architecture.

Why Transcoding is Required

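Users watch videos on different devices and networks. Transcoding converts a single source video into multiple quality levels (renditions), so each viewer can be served a version that matches their screen size and available bandwidth.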

Example Quality Ladder

- 144p (slow network)

- 360p (mobile viewing)

- 720p (HD)

- 1080p (Full HD)

- 4K (high bandwidth)

Offering the stream as a ladder of renditions that the player switches between, based on measured bandwidth, is called Adaptive Bitrate Streaming (ABR).
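To make the quality ladder concrete, here is a minimal sketch of how a ladder might be represented and how a rendition could be chosen for a given bandwidth. The bitrates and the 0.8 safety factor are illustrative assumptions, not values used by any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    height: int
    bitrate_kbps: int


# Illustrative bitrates only; real ladders are tuned per codec and content.
LADDER = [
    Rendition("144p", 144, 150),
    Rendition("360p", 360, 800),
    Rendition("720p", 720, 2800),
    Rendition("1080p", 1080, 5000),
    Rendition("4K", 2160, 16000),
]


def pick_rendition(throughput_kbps: float, safety: float = 0.8) -> Rendition:
    """Pick the highest rendition whose bitrate fits within a safety margin."""
    budget = throughput_kbps * safety
    affordable = [r for r in LADDER if r.bitrate_kbps <= budget]
    if not affordable:
        return LADDER[0]  # fall back to the lowest rung
    return max(affordable, key=lambda r: r.bitrate_kbps)


print(pick_rendition(4000).name)  # budget is 3200 kbps, so 720p fits
```

The safety factor leaves headroom so that a small dip in bandwidth does not immediately cause a stall.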

Popular Transcoding Tools

- FFmpeg

- AWS Elemental MediaLive

- Google Transcoder API

External reference: https://aws.amazon.com/media-services/
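As a rough sketch of what one rung of the ladder could look like with FFmpeg, the helper below builds a command line that scales the input, encodes it with H.264 and AAC, and writes HLS segments. The function name, input URL, and output path are hypothetical, and the command is only printed here, not executed. Production pipelines usually also control keyframe intervals so that segments cut cleanly.

```python
import shlex


def ffmpeg_hls_args(input_url, height, video_kbps, out_playlist, segment_seconds=4):
    """Build an FFmpeg argument list for a single HLS rendition."""
    return [
        "ffmpeg",
        "-i", input_url,
        "-vf", f"scale=-2:{height}",   # keep aspect ratio, force an even width
        "-c:v", "libx264",
        "-b:v", f"{video_kbps}k",
        "-c:a", "aac",
        "-b:a", "128k",
        "-f", "hls",
        "-hls_time", str(segment_seconds),  # target segment duration
        "-hls_list_size", "6",              # segments kept in the live playlist
        out_playlist,
    ]


# Hypothetical input and output locations, for illustration only.
args = ffmpeg_hls_args("rtmp://localhost/live/demo", 720, 2800, "out/720p.m3u8")
print(shlex.join(args))
```

Running one such command per rendition is the simplest approach; managed services such as those listed above handle the same job at scale.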

3. Chunker Layer (Segmented Streaming)

After transcoding, videos are divided into small chunks or segments.

Why Chunking Matters

- Faster buffering

- Seamless quality switching

- Reduced latency

- Improved CDN caching

Typical Chunk Duration

- 2–6 seconds for HLS

- 1–2 seconds for low-latency streaming
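A back-of-the-envelope calculation shows why chunk duration matters for latency. The sketch below assumes the player buffers about three segments before it starts playing, which is a common default but still an assumption, and it ignores encoding and network delay unless you pass them in as overhead.

```python
def estimated_latency_seconds(segment_seconds, buffered_segments=3,
                              pipeline_overhead_seconds=0.0):
    """Rough glass-to-glass latency: buffered media plus fixed overhead."""
    return segment_seconds * buffered_segments + pipeline_overhead_seconds


for duration in (6, 4, 2, 1):
    print(f"{duration}s segments -> ~{estimated_latency_seconds(duration):.0f}s behind live")
```

Under these assumptions, 6-second segments put the viewer about 18 seconds behind live, while 2-second segments bring that down to about 6 seconds.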

Streaming Formats

- HLS (HTTP Live Streaming)

- MPEG-DASH
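In HLS, the available renditions are advertised in a master playlist, a small text file listing each variant with its bandwidth and resolution. The following sketch generates one; the variant URIs and bitrates are made up for the example.

```python
def build_master_playlist(variants):
    """Render an HLS master playlist from a list of variant descriptions."""
    lines = ["#EXTM3U", "#EXT-X-VERSION:3"]
    for v in variants:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={v['bandwidth']},"
            f"RESOLUTION={v['width']}x{v['height']}"
        )
        lines.append(v["uri"])
    return "\n".join(lines) + "\n"


# Hypothetical variants; BANDWIDTH is expressed in bits per second.
VARIANTS = [
    {"bandwidth": 800_000, "width": 640, "height": 360, "uri": "360p/index.m3u8"},
    {"bandwidth": 2_800_000, "width": 1280, "height": 720, "uri": "720p/index.m3u8"},
    {"bandwidth": 5_000_000, "width": 1920, "height": 1080, "uri": "1080p/index.m3u8"},
]

playlist = build_master_playlist(VARIANTS)
print(playlist)
```

Each variant URI points to a media playlist that lists the actual segments for that quality level.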


4. CDN Distribution Layer

Content Delivery Networks (CDNs) distribute streaming content efficiently around the world by caching video segments on servers close to viewers.

CDN Hierarchy

- Master CDN (Origin Server)

- Regional CDN

- Edge CDN (Closest to users)
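The hierarchy above behaves like a chain of caches: an edge that misses asks the regional tier, which asks the origin only if it also misses. The toy model below illustrates the idea; the class names are hypothetical, and it leaves out expiry, eviction, and everything else a real CDN does.

```python
class Origin:
    """Stands in for the origin server that holds every segment."""

    def __init__(self):
        self.requests = 0

    def get(self, path):
        self.requests += 1
        return f"bytes-of-{path}".encode()


class CacheTier:
    """A cache that forwards misses to its parent tier and stores the result."""

    def __init__(self, name, parent):
        self.name = name
        self.parent = parent
        self.cache = {}

    def get(self, path):
        if path not in self.cache:
            self.cache[path] = self.parent.get(path)  # miss: ask the tier above
        return self.cache[path]


origin = Origin()
regional = CacheTier("regional", origin)
edge_a = CacheTier("edge-a", regional)
edge_b = CacheTier("edge-b", regional)

edge_a.get("seg1.ts")  # misses at the edge and regional tiers, hits the origin
edge_a.get("seg1.ts")  # served from the edge-a cache
edge_b.get("seg1.ts")  # misses at edge-b, served by the regional cache
print("origin requests:", origin.requests)
```

Three viewer requests result in a single origin request, which is where the lower origin load comes from.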

Benefits of CDN

- Reduced latency

- Faster load time

- Lower origin server load

- High availability

Popular CDN Providers

- Cloudflare

- Akamai

- AWS CloudFront

- Fastly

External reference: https://www.cloudflare.com/cdn/

5. Backend & DRM Layer

Streaming platforms rely heavily on backend services and content protection mechanisms.

Backend Responsibilities

- User authentication

- Subscription validation

- Stream manifest generation

- Playback analytics

- Session management
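One common way to tie manifest delivery to authentication is a short-lived signed URL. The sketch below uses an HMAC over the path, user, and expiry time. The secret, parameter names, and URL layout are assumptions made for the example; real CDNs each define their own token format.

```python
import hashlib
import hmac

SECRET = b"demo-secret"  # hypothetical; load from a secrets manager in practice


def _token(path, user_id, expires_at, secret):
    message = f"{path}|{user_id}|{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_manifest_url(path, user_id, expires_at, secret=SECRET):
    """Return a manifest URL carrying an expiry time and an HMAC token."""
    token = _token(path, user_id, expires_at, secret)
    return f"{path}?user={user_id}&exp={expires_at}&token={token}"


def verify(path, user_id, expires_at, token, now, secret=SECRET):
    """Accept the request only if it has not expired and the token matches."""
    if now > expires_at:
        return False
    expected = _token(path, user_id, expires_at, secret)
    return hmac.compare_digest(expected, token)


url = sign_manifest_url("/live/match1/master.m3u8", "user42", expires_at=1_700_000_300)
print(url)
```

The backend would issue such a URL only after the subscription check passes, and the edge or origin would run the verification before serving the manifest.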

DRM (Digital Rights Management)

DRM encrypts the content and releases decryption keys only to licensed players, which limits unauthorized copying and redistribution.

Popular DRM Systems

- Widevine (Google: Android, Chrome)

- FairPlay (Apple: iOS, macOS, Safari)

- PlayReady (Microsoft: Windows, Edge, Xbox)

6. User Playback Flow

When a user clicks Watch Now, the following steps occur:

- Player requests manifest file from backend

- Backend validates subscription and returns the manifest (stream) URL

- Player is routed to the nearest CDN edge server, typically via DNS or anycast routing

- Video chunks download sequentially

- Player switches quality automatically based on measured bandwidth (adaptive bitrate)

This ensures smooth playback even during network fluctuations.
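The automatic quality switch in the last step depends on the player estimating its current bandwidth. A simple approach, sketched below, is to smooth per-segment download speeds with an exponentially weighted moving average. The class name and the `alpha` value are illustrative; real players use more elaborate heuristics that also consider buffer level.

```python
class ThroughputEstimator:
    """Smooths per-segment download speeds into one bandwidth estimate."""

    def __init__(self, alpha=0.3):
        self.alpha = alpha          # weight given to the newest sample
        self.estimate_kbps = None   # no estimate until the first sample

    def add_sample(self, segment_bytes, download_seconds):
        sample_kbps = segment_bytes * 8 / 1000 / download_seconds
        if self.estimate_kbps is None:
            self.estimate_kbps = sample_kbps
        else:
            self.estimate_kbps = (
                self.alpha * sample_kbps + (1 - self.alpha) * self.estimate_kbps
            )
        return self.estimate_kbps


estimator = ThroughputEstimator()
estimator.add_sample(1_000_000, 2.0)  # a 1 MB segment downloaded in 2 s
estimator.add_sample(1_000_000, 4.0)  # the network slowed down
print(round(estimator.estimate_kbps), "kbps")
```

The player would compare this estimate with the bitrates in the manifest before requesting the next chunk, stepping down when the estimate drops and back up when it recovers.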

Low Latency Live Streaming

Low latency streaming is becoming a key requirement for live sports and interactive events.

Technologies Enabling Low Latency

- Low Latency HLS (LL-HLS)

- WebRTC streaming

- Chunked CMAF

Benefits

- Real-time experience

- Reduced delay in live sports

- Interactive live engagement

7. Docker & Kubernetes in Streaming Infrastructure

Modern streaming platforms adopt containerization for scalability and resilience.

Why Containers Are Used

- Microservice architecture

- Easy scaling of encoding pipelines

- Faster deployment

- Improved fault isolation

Use Cases

- Transcoding microservices

- Backend APIs

- Monitoring & analytics

- Stream orchestration
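Scaling a pool of transcoding workers usually comes down to a proportional rule: if each worker is busier than the target, add workers in the same ratio. The sketch below follows the basic formula documented for the Kubernetes Horizontal Pod Autoscaler, without the tolerance and stabilization behaviour the real controller applies. The replica limits are hypothetical.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=50):
    """Proportional scaling rule, clamped to a minimum and maximum."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# 4 transcoder pods at 90% average CPU with a 60% target
print(desired_replicas(4, 90, 60))
```

With 4 pods at 90% CPU against a 60% target, the rule asks for 6 pods. When load falls to 30%, it scales back to 2.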


8. Real-World OTT Architecture Example

Large streaming platforms typically implement:

- Multi-region deployments

- Multi-CDN strategy

- AI-driven bitrate optimization

- Real-time monitoring

- Auto-scaling clusters

This lets them absorb very large concurrent audiences while reducing the risk of downtime.
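A multi-CDN strategy needs some rule for deciding which CDN serves a given viewer. One simple version, sketched below, drops any CDN whose error rate is too high and then picks the one with the lowest latency. The CDN names, metrics, and 5% threshold are hypothetical; real systems draw on live monitoring data and often weigh cost as well.

```python
def choose_cdn(cdns, max_error_rate=0.05):
    """Pick the lowest-latency CDN among those under the error threshold."""
    healthy = [c for c in cdns if c["error_rate"] <= max_error_rate]
    candidates = healthy or cdns  # if all are unhealthy, still pick the fastest
    return min(candidates, key=lambda c: c["latency_ms"])["name"]


# Hypothetical measurements for one region.
measurements = [
    {"name": "cdn-a", "latency_ms": 35, "error_rate": 0.12},
    {"name": "cdn-b", "latency_ms": 48, "error_rate": 0.01},
    {"name": "cdn-c", "latency_ms": 60, "error_rate": 0.00},
]

print(choose_cdn(measurements))
```

Here `cdn-a` is the fastest but is excluded for its error rate, so traffic goes to `cdn-b`.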

Key Benefits of Modern Streaming Architecture

✅ Global scalability

✅ High-quality adaptive streaming

✅ Secure content delivery

✅ Reduced buffering

✅ Reliable user experience

Challenges in Live Streaming Systems

- Managing latency

- High CDN cost

- Encoding resource consumption

- DRM complexity

- Network congestion handling

Future of Live Video Streaming Architecture

The future of streaming is driven by emerging technologies.

Upcoming Trends

- AI-powered bitrate optimization

- Edge computing streaming

- 5G ultra-low latency delivery

- Serverless media pipelines

- Interactive live commerce streaming

These innovations will further enhance viewer engagement and streaming performance.

Conclusion

Live video streaming architecture combines ingest servers, transcoding pipelines, chunk-based packaging, CDN distribution, and backend orchestration to deliver seamless real-time video experiences. Adaptive bitrate streaming ensures optimal playback across devices, while DRM secures premium content. As technologies like 5G, edge computing, and AI continue to evolve, streaming platforms will become faster, smarter, and more interactive.

For developers and system designers, understanding CDN hierarchy, transcoding workflows, and playback optimization is essential for building scalable OTT platforms.